This report examines the coordinated Russian Matryoshka bot network malign influence campaign of February 2026. It combines cross-platform narrative analysis, network amplification mapping, and visual-content forensics to assess how orchestrated actors manufacture credibility, manipulate public perception, and inject politically charged narratives into mainstream discourse. Drawing on case studies from X, Telegram, and affiliated online networks, this analysis traces synchronized posting patterns, AI-driven engagement, and the strategic use of scandal-based narratives targeting Western political leaders. By integrating narrative tracing with platform-behavior analysis and open-source digital evidence, the report maps how fabricated claims, manipulated media, and reputational attacks spread across interconnected information environments. It highlights coordinated amplification tactics, guilt-by-association strategies, and synthetic content designed to simulate grassroots consensus while eroding institutional trust and shaping geopolitical perceptions across domestic and international audiences.

Russian information operations increasingly rely on AI to accelerate coordinated digital influence designed to manipulate online discourse, erode trust in democratic institutions, and shape international perceptions. Unlike traditional propaganda, these operations leverage large networks of inauthentic social media accounts, synthetic media, and coordinated cross-platform amplification to create the illusion of organic public debate. By mimicking legitimate journalists, media outlets, and everyday users, these accounts can insert misleading narratives into online conversations while concealing the centralized orchestration behind them.

Recent campaigns illustrate how malign actors exploit emotional narratives to maximize reach and engagement. Allegations tied to widely known scandals—such as the crimes of Jeffrey Epstein—are frequently repurposed to discredit Western political leaders, undermine public trust in democratic governments, and weaken support for Western foreign policy objectives. These narratives are rarely intended to prove a single claim; instead, they introduce competing accusations and conspiratorial explanations that flood the information environment and generate uncertainty.

Identical or nearly identical posts are often published simultaneously across multiple platforms, including X (Twitter), Telegram, Bluesky, and fringe online forums. By distributing the same narrative across different digital environments at roughly the same time, influence operators create the appearance that multiple independent sources are reporting on the same information. This tactic exploits how users interpret repetition online: when a claim appears in several places at once, it can appear corroborated even if all instances originate from a single coordinated network. The result is an artificially constructed perception of credibility and momentum that increases the likelihood that the narrative will be shared, discussed, and eventually picked up by larger online communities.
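
The report does not specify how cross-platform duplication was measured. As an illustration only, one common way to surface this pattern is near-duplicate matching: split each post into overlapping three-word sequences (shingles), compare posts with Jaccard similarity, and flag pairs from different platforms that are both highly similar and close in time. The Python sketch below works under stated assumptions; the `Post` record, the 30-minute window, and the 0.8 similarity threshold are hypothetical choices, not parameters from this analysis.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timedelta
from itertools import combinations


@dataclass
class Post:
    # Hypothetical record; real platform exports use different field names.
    platform: str
    account: str
    text: str
    posted_at: datetime


def shingles(text: str, k: int = 3) -> set:
    """Lowercase the text, keep word characters only, return k-word shingles."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Size of the intersection divided by size of the union (0.0 if both empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cross_platform_duplicates(posts, window=timedelta(minutes=30), threshold=0.8):
    """Return (post_a, post_b, score) for near-identical posts on different
    platforms published within `window` of each other. Thresholds are hypothetical."""
    sigs = [shingles(p.text) for p in posts]
    flagged = []
    for (i, a), (j, b) in combinations(enumerate(posts), 2):
        if a.platform == b.platform:
            continue  # only cross-platform repetition is of interest here
        if abs(a.posted_at - b.posted_at) > window:
            continue  # too far apart in time to count as simultaneous
        score = jaccard(sigs[i], sigs[j])
        if score >= threshold:
            flagged.append((a, b, round(score, 2)))
    return flagged


# Invented toy data for illustration only.
t0 = datetime(2026, 2, 10, 12, 0)
posts = [
    Post("X", "acct_a", "Leaked files prove the official knew everything!", t0),
    Post("Telegram", "chan_b", "Leaked files prove the official knew everything", t0 + timedelta(minutes=4)),
    Post("Bluesky", "acct_c", "Lovely weather in Paris today.", t0 + timedelta(minutes=5)),
]
for a, b, score in cross_platform_duplicates(posts):
    print(a.platform, b.platform, score)  # X Telegram 1.0
```

Comparing every pair of posts is quadratic, so at platform scale this comparison is usually approximated with MinHash or locality-sensitive hashing rather than computed exactly.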

Disinformation networks frequently distribute content in narrow time windows and promote links to fabricated or misleading articles in coordinated batches. These accounts often repost the same links, phrases, or hashtags within minutes of each other, signaling centralized control rather than organic discussion. Although many of these posts receive little direct interaction from real users, the repeated reposting and inflated engagement metrics—such as views, likes, or shares—can make the content appear widely supported. Platform algorithms may further amplify these signals, unintentionally boosting the visibility of coordinated messaging and allowing the narrative to spread beyond the original bot network.
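
The timing pattern described above is often operationalized as coordinated link sharing: pairs of accounts that repeatedly share the same URL within seconds of each other. The Python sketch below is one hedged way to express that heuristic and is not the method used in this report; the 60-second window and the three-URL minimum are hypothetical thresholds that would need tuning against real data.

```python
from collections import defaultdict
from datetime import datetime, timedelta
from itertools import combinations


def coordinated_pairs(shares, window=timedelta(seconds=60), min_repeats=3):
    """Flag account pairs that repeatedly share the same URLs almost simultaneously.

    shares: iterable of (account, url, timestamp) tuples.
    Returns {(account_a, account_b): n_urls} for pairs that co-shared at least
    `min_repeats` distinct URLs within `window` of each other.
    Both thresholds are hypothetical defaults, not values from the report.
    """
    by_url = defaultdict(list)
    for account, url, ts in shares:
        by_url[url].append((ts, account))

    pair_urls = defaultdict(set)
    for url, events in by_url.items():
        events.sort()  # chronological, so t2 >= t1 for every pair below
        for (t1, a1), (t2, a2) in combinations(events, 2):
            if a1 != a2 and t2 - t1 <= window:
                pair_urls[tuple(sorted((a1, a2)))].add(url)

    return {pair: len(urls) for pair, urls in pair_urls.items() if len(urls) >= min_repeats}


# Invented toy data: two accounts co-share three links 20 seconds apart,
# while an ordinary user shares one of the same links hours later.
t0 = datetime(2026, 2, 10, 9, 0)
shares = []
for n in range(3):
    url = f"https://example.com/story-{n}"
    start = t0 + timedelta(hours=n)
    shares += [("bot_1", url, start), ("bot_2", url, start + timedelta(seconds=20))]
shares.append(("real_user", "https://example.com/story-0", t0 + timedelta(hours=5)))

print(coordinated_pairs(shares))  # {('bot_1', 'bot_2'): 3}
```

A single fast co-share can be coincidence. Requiring repeats across several distinct URLs is what separates a persistently coordinated pair from two users who happened to react to the same breaking story.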

Recent campaigns have repeatedly invoked the Jeffrey Epstein case to associate Western political figures with criminal networks or morally compromising behavior. Because the Epstein scandal is widely recognized and emotionally charged, it provides an effective narrative hook for disinformation actors seeking to capture public attention. These campaigns often rely on fabricated quotes, doctored documents, manipulated images, or selectively interpreted correspondence to create the impression of a hidden connection between political leaders and Epstein’s activities. Rather than presenting verifiable evidence, the strategy focuses on guilt-by-association: repeatedly linking a political figure to the scandal so that suspicion persists even after the claims are debunked. Over time, the cumulative effect of these narratives can erode public trust in Western institutions and political leadership.

The amplified narrative content predominantly revolves around influence efforts targeting Ukraine and Western allies, often leveraging the Epstein files to allege corruption, human trafficking, and unethical bioresearch linked to Ukrainian elites. Common themes include discrediting Ukraine’s military integrity, sowing distrust in Western support, and framing Russia as a truth-teller amidst purported elite conspiracies. Parallel narratives stress alleged Western bias, manipulation by "liberal forces," and geopolitical rivalry expressed through influence operations in regions like Africa and the Middle East. The use of AI-generated synthetic content and manipulated social media posts further aims to destabilize trust and shape public perception in Russia’s favor.

The primary actors spreading these narratives on social media typically fall into several distinct but interconnected categories:

- Automated bot accounts. In the Matryoshka campaign, these are the most visible actors spreading the narratives. They are designed to simulate ordinary social media users and are responsible for rapidly disseminating identical or near-identical posts across platforms.
- Dormant or repurposed accounts. Many influence campaigns rely on older dormant accounts that have been hacked, purchased, or repurposed. Because these accounts often have established histories, follower bases, and normal posting patterns, they appear more credible than newly created bots.
- State-aligned media. Russian state-aligned media organizations and affiliated outlets sometimes indirectly amplify narratives that originate in bot networks. While they may not repeat the most extreme claims directly, they often frame stories in ways that reinforce the same themes.
- Fabricated personas. Some campaigns also deploy fabricated personas that appear to be celebrities, experts, or activists. These accounts may distribute manipulated videos, AI-generated audio clips, or staged political messages.

Russian influence campaigns frequently rely on large networks of fake or compromised accounts that present themselves as ordinary users, journalists, or independent media outlets. These accounts are designed to mimic legitimate actors through region-specific language, stylistic formatting, and familiar branding.

The accounts often reuse logos, typography, and visual layouts from well-known Western media organizations or human rights groups. By replicating these visual identities, the posts create an appearance of legitimacy that increases the likelihood that users will trust and share the content.

In many cases, the accounts used in these campaigns are older dormant accounts that were likely hacked or repurposed, making them harder for automated moderation systems to identify as newly created bots.
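
Because a reactivated account looks old, a filter based on account creation date alone will miss it. One alternative heuristic, sketched below in Python as an assumption rather than a documented platform or EdgeTheory technique, is to look for a long silence followed by a sudden burst of posting. The one-year gap, seven-day window, and 20-post burst size are hypothetical values.

```python
from datetime import datetime, timedelta


def reactivated_after_dormancy(post_times, min_gap=timedelta(days=365),
                               burst_window=timedelta(days=7), burst_size=20):
    """Return the start of the first posting burst that follows a long silence, else None.

    post_times: datetimes of one account's posts (any order).
    A "burst" means at least `burst_size` posts within `burst_window` of the
    first post after a gap of at least `min_gap`. All thresholds are hypothetical.
    """
    times = sorted(post_times)
    for i in range(1, len(times)):
        if times[i] - times[i - 1] >= min_gap:
            start = times[i]
            # Count posts from the reactivation point that fall inside the burst window.
            burst = sum(1 for t in times[i:] if t - start <= burst_window)
            if burst >= burst_size:
                return start
    return None


# Invented toy history: a year of monthly posts in 2019, silence, then 30 hourly posts.
old = [datetime(2019, 1, 1) + timedelta(days=30 * k) for k in range(12)]
burst = [datetime(2026, 2, 9) + timedelta(hours=k) for k in range(30)]
print(reactivated_after_dormancy(old + burst))  # 2026-02-09 00:00:00
print(reactivated_after_dormancy(old))          # None
```

In this toy history the account has been active since 2019, so an age-based check would pass it; the dormancy gap followed by a burst is what exposes the change in behavior.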

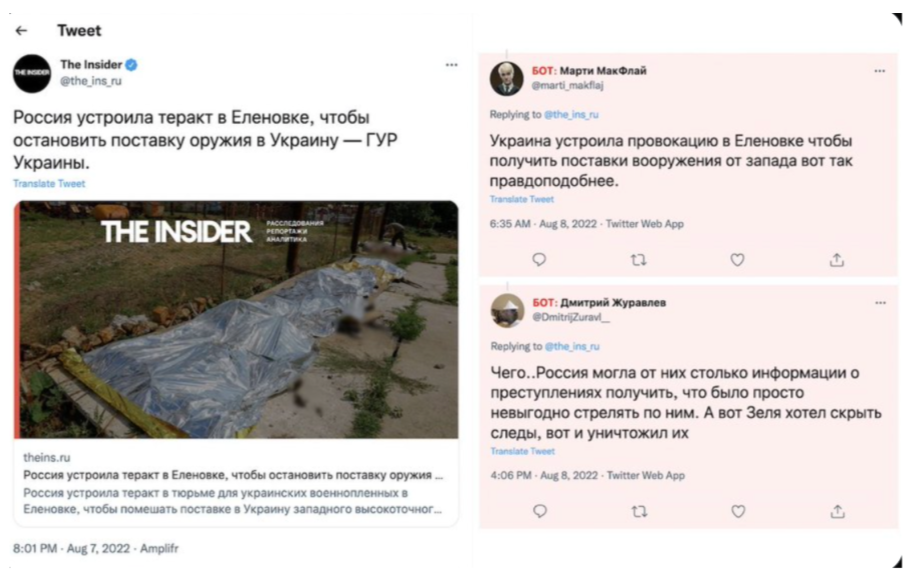

X post by The Insider on Russia’s disinformation campaign

The success of these campaigns relies on synchronized dissemination across multiple platforms. Identical or nearly identical posts frequently appear at the same time on platforms such as X (Twitter), Telegram, Bluesky, and fringe online forums.

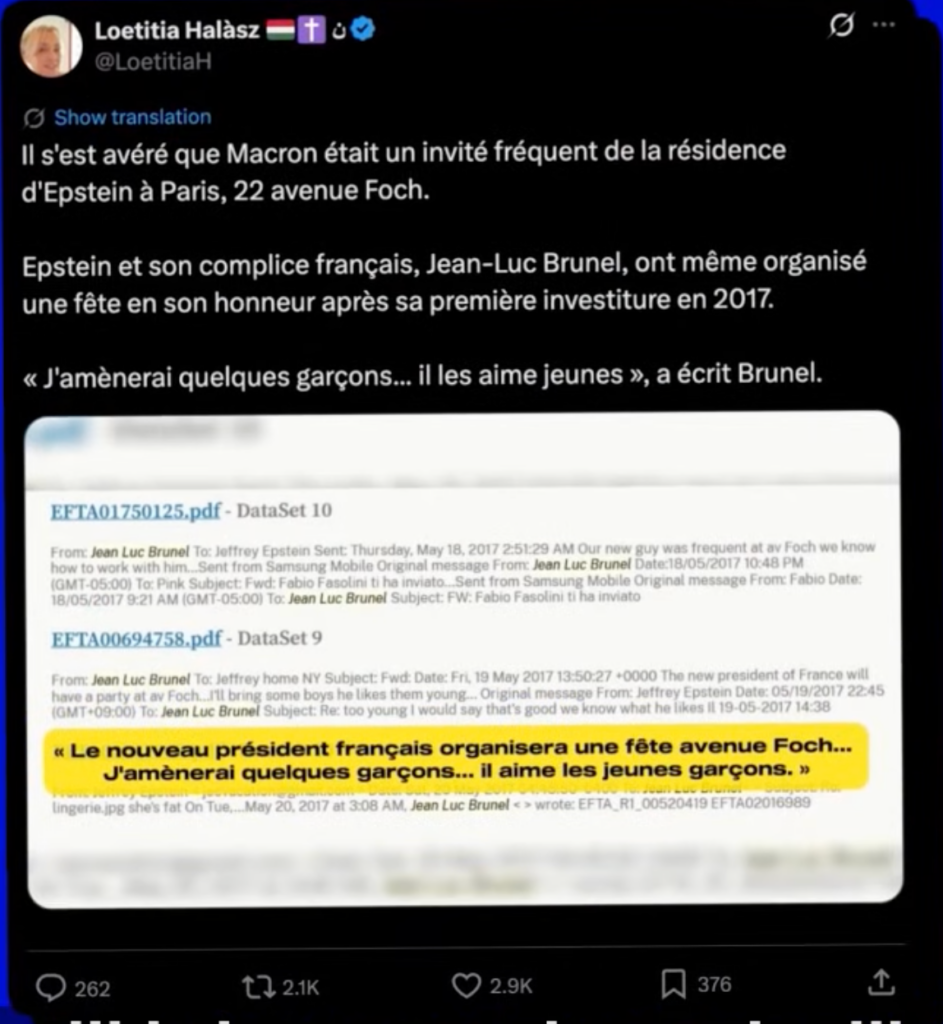

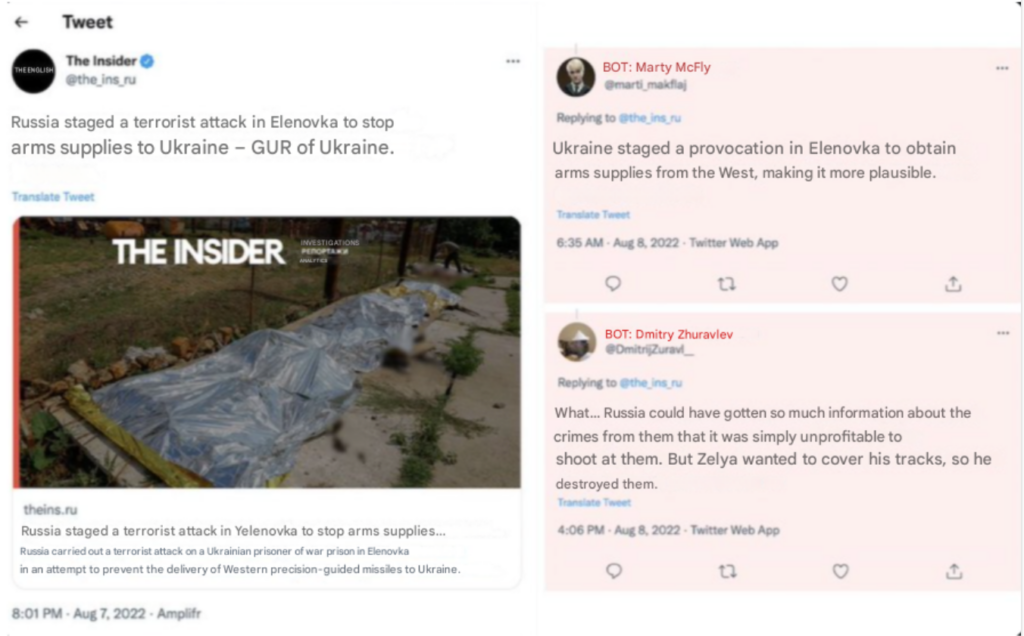

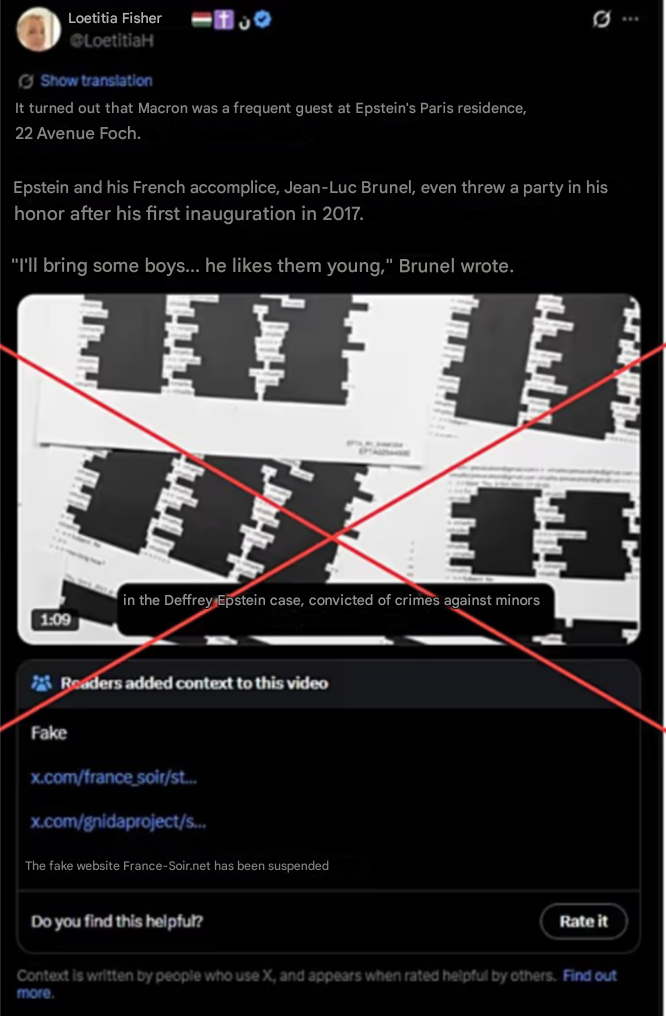

X post by an account linked to the Matryoshka bot network claiming Macron likes young boys

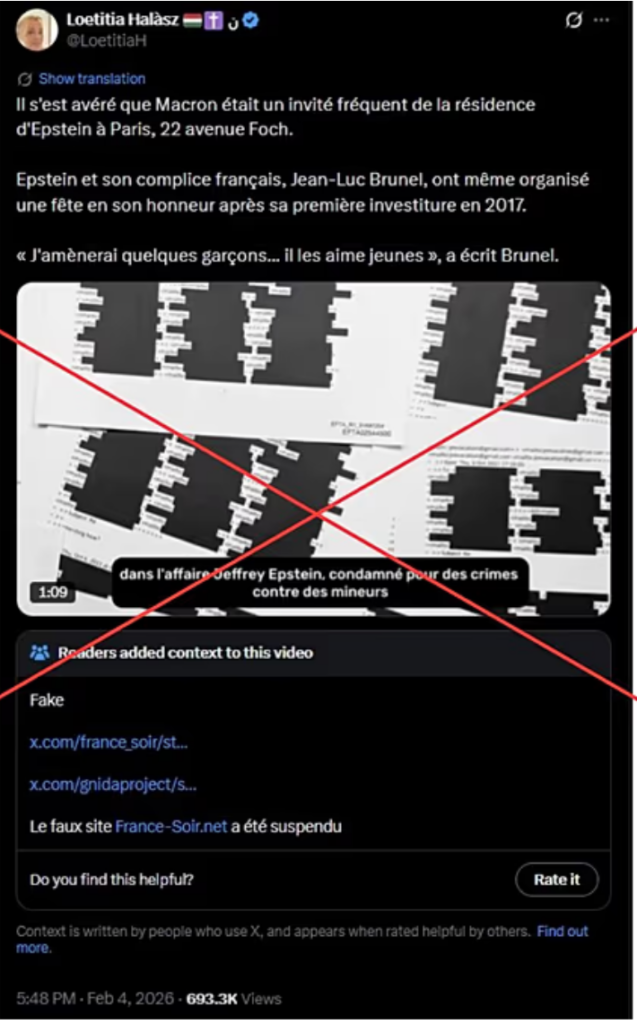

@LoetitiaH, a frequent relay for the Matryoshka bot network’s information operations, was the first account to post the AI-generated video claiming that Macron was connected to Epstein. This post illustrates how disinformation campaigns often rely on the visual imitation of documentary evidence to create credibility. The screenshot foregrounds what appear to be excerpts from leaked email files, complete with file names and timestamps, and highlights a provocative sentence in bright color to draw attention to the alleged incriminating detail. This presentation style mimics the format of investigative disclosures, encouraging viewers to interpret the material as authentic archival evidence even though the context, source, and authenticity of the documents cannot be verified. By isolating fragments of text and emphasizing them visually, the post attempts to transform ambiguous or fabricated material into a compelling narrative.

The circulation of the claim also reflects patterns of strategic seeding within influence networks. Early dissemination can help narratives gain traction quickly, allowing them to spread into wider social media ecosystems before verification occurs. In this context, the post functions less as a substantiated allegation and more as a reputational attack designed to generate suspicion and viral engagement through the appearance of leaked evidence and insider revelations.

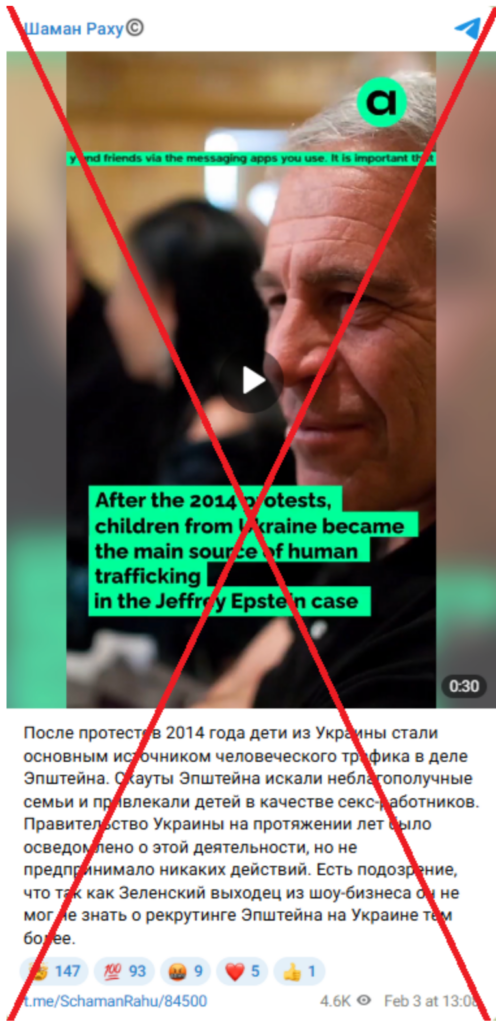

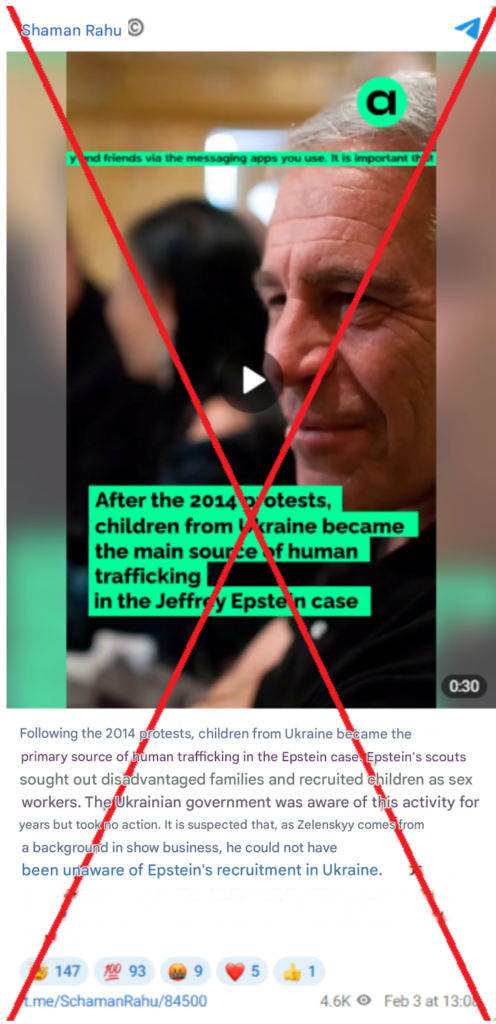

Telegram post imitating a Russian dissident news source and claiming that Zelensky was aware of Epstein’s human trafficking

This post promotes a fabricated narrative claiming that, after the 2014 protests in Ukraine, Ukrainian children became a primary source of human trafficking linked to Jeffrey Epstein. The video is presented as if it were produced by the Russian opposition outlet Agentstvo, using its logo to give the content the appearance of a legitimate investigative report. In reality, the video was doctored by propagandists: the branding was added to mislead viewers, while the format, visual markers, and watermarks differ from those used in authentic Agentstvo videos. This impersonation tactic is intended to borrow credibility from a recognizable independent outlet to make the claim appear more trustworthy.

The narrative itself relies on a common disinformation strategy—linking a real and emotionally charged criminal case to a major political event without providing verifiable evidence. The video claims that Ukrainian elites supposedly knew about child trafficking connected to Epstein after 2014 and “turned a blind eye,” and the accompanying text further insinuates that Ukrainian authorities, including Volodymyr Zelensky, may have been aware of the alleged activity. By invoking the exploitation of children and combining it with accusations against political leaders, the post seeks to provoke outrage and encourage rapid sharing. Such narratives fit a broader pattern of disinformation that attempts to discredit Ukraine and its leadership by associating them with one of the most notorious criminal cases in recent history.

Posting the same claim across several platforms and accounts creates the illusion of independent confirmation, making the narrative appear widely discussed and credible. In some cases, bot networks also artificially inflate engagement metrics such as views, likes, and reposts.

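Researchers have observed that many of these accounts post in narrow time windows, repeat the same links, phrases, or hashtags within minutes of each other, and attract little direct interaction from real users despite inflated engagement metrics. These patterns indicate centralized orchestration rather than organic online activity.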

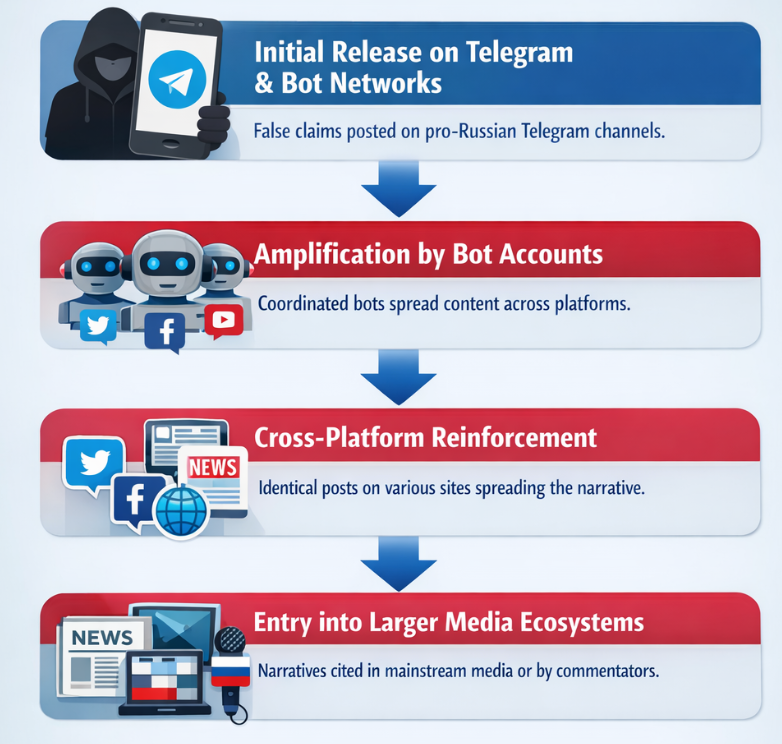

Russian influence networks often follow a structured pipeline to move false narratives from fringe platforms into mainstream discussion.

The process typically unfolds in several stages:

X post showing Russian bot responses to a Western news source

The post illustrates a common narrative tactic used in coordinated disinformation campaigns: the rapid introduction of counter-claims that shift blame and create confusion around a reported event. After a report by The Insider alleging Russian responsibility for the Olenivka attack, bot accounts responded with alternative explanations, accusing Ukraine of staging the incident to secure Western weapons. By presenting these replies as ordinary user reactions on X, the campaign attempts to manufacture the appearance of debate and uncertainty. This strategy does not aim to prove a specific alternative narrative but instead seeks to undermine the credibility of the original report and dilute accountability by flooding the conversation with competing claims.

One of the primary themes in recent campaigns involves associating Western leaders with the crimes of Jeffrey Epstein. Posts frequently claim that political leaders were secretly connected to Epstein’s network or participated in illicit activities.

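These narratives typically rely on fabricated quotes, doctored documents, manipulated images, or selectively interpreted correspondence.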

Rather than proving wrongdoing, the campaigns rely on guilt-by-association tactics. By repeatedly linking political figures to Epstein, the narrative attempts to contaminate public perception of Western leadership.

For example, allegations targeting French President Emmanuel Macron portray him as connected to Epstein’s network through fabricated stories and doctored visuals. Even when these claims are later debunked, their repeated circulation can still influence public perception.

This strategy exploits the fact that sensational allegations often spread faster than corrections.

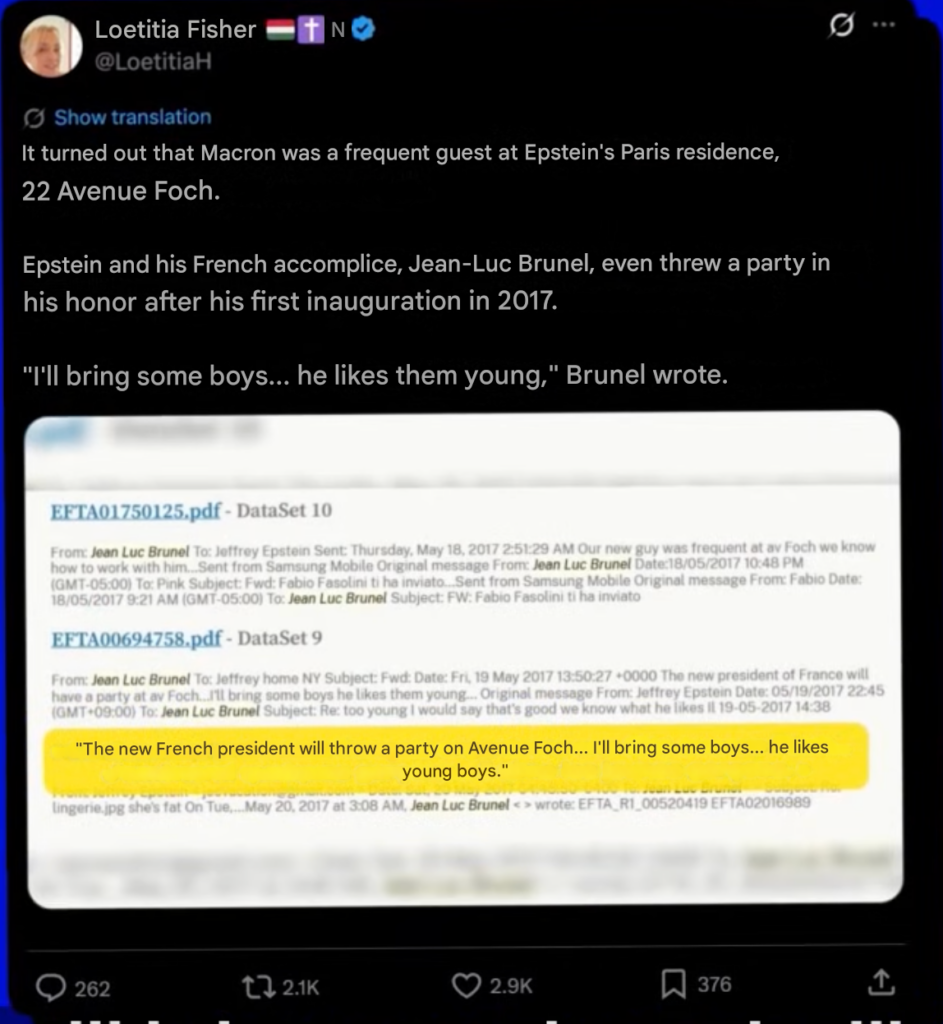

X post by an account linked to the Matryoshka bot network claiming Macron was a frequent guest at Epstein’s Paris residence

This post frames a claim that Emmanuel Macron was a “frequent guest” at a Paris residence allegedly tied to Jeffrey Epstein, and that Epstein and Jean-Luc Brunel organized a celebration for him in 2017. The structure is typical of deceptive narratives: it presents highly specific details (a street address, a quoted message, a date tied to Macron’s first inauguration) to create an appearance of evidentiary depth, while relying on unverifiable or previously debunked sources. The inclusion of a blurred document image and a dramatic quote (“I’ll bring some boys… he likes them young”) is designed to provoke moral outrage and bypass critical scrutiny.

In the broader bot-amplified narrative connecting Epstein to France and Ukraine, this post functions as a bridge: by tying a French head of state to Epstein’s crimes, it seeds distrust toward a key European supporter of Ukraine. Even though Ukraine is not explicitly mentioned in the screenshot, similar narratives often extend the same guilt-by-association logic to Ukrainian officials or Western backers of Kyiv, implying a morally corrupt transnational elite. The tactic relies on associative contamination—once Macron is rhetorically linked to Epstein, France’s political leadership becomes suspect, and by extension so does its foreign policy. The repetition of these claims across low-credibility domains and coordinated accounts suggests a strategy not to prove wrongdoing, but to normalize suspicion and erode confidence in Western political institutions.

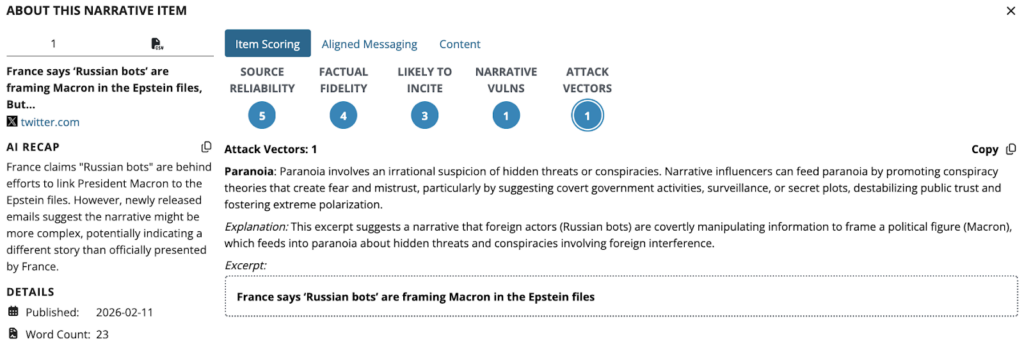

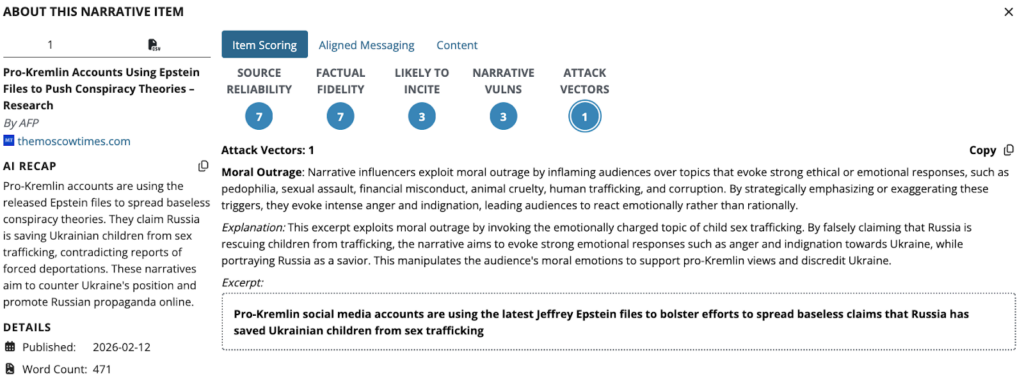

Edge Theory Narrative Attack Vector Classifier

This narrative promotes allegations linking Emmanuel Macron to the network of Jeffrey Epstein by suggesting that newly released documents contradict claims that the rumors were driven by foreign disinformation. The framing implies that references to Macron in correspondence associated with Epstein indicate a potentially substantive connection, even while acknowledging that more extreme accusations may be fabricated. By highlighting partial or ambiguous references and presenting them as suspicious, the narrative relies on guilt-by-association to cast doubt on Macron and undermine official statements dismissing the claims as the work of “Russian bots.” This type of messaging exploits the notoriety of the Epstein case to damage public trust in Western political leadership while maintaining plausible deniability through selective interpretation of documents.

Edge Theory Narrative Attack Vector Classifier

This narrative reframes the crimes of Jeffrey Epstein as the result of a Western disinformation campaign designed to deflect attention from powerful Western actors. It claims that Western media falsely blamed Vladimir Putin for involvement in Epstein-related activities in order to obscure Epstein’s alleged connections to intelligence services such as Mossad. By portraying mainstream reporting as coordinated propaganda and suggesting that Epstein’s true affiliations were deliberately concealed, the narrative seeks to undermine trust in Western journalism and shift suspicion away from Russia while promoting conspiratorial explanations for the Epstein case.

Many of the narratives extend beyond individual leaders and attempt to connect scandals to broader geopolitical issues.

In several campaigns, Epstein-related allegations have been combined with insinuations about Ukraine or Western support for Kyiv. These posts suggest that Western political elites are morally compromised and therefore cannot be trusted in international affairs.

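The messaging often implies that Ukrainian officials and their Western backers form a morally corrupt transnational elite whose foreign policy commitments cannot be trusted.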

By merging unrelated scandals with geopolitical issues, the campaigns aim to undermine public support for Western foreign policy commitments.

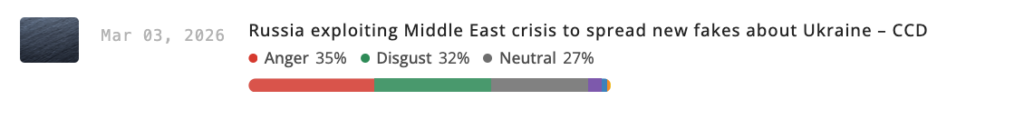

Edge Theory Emotion Profile Classifier

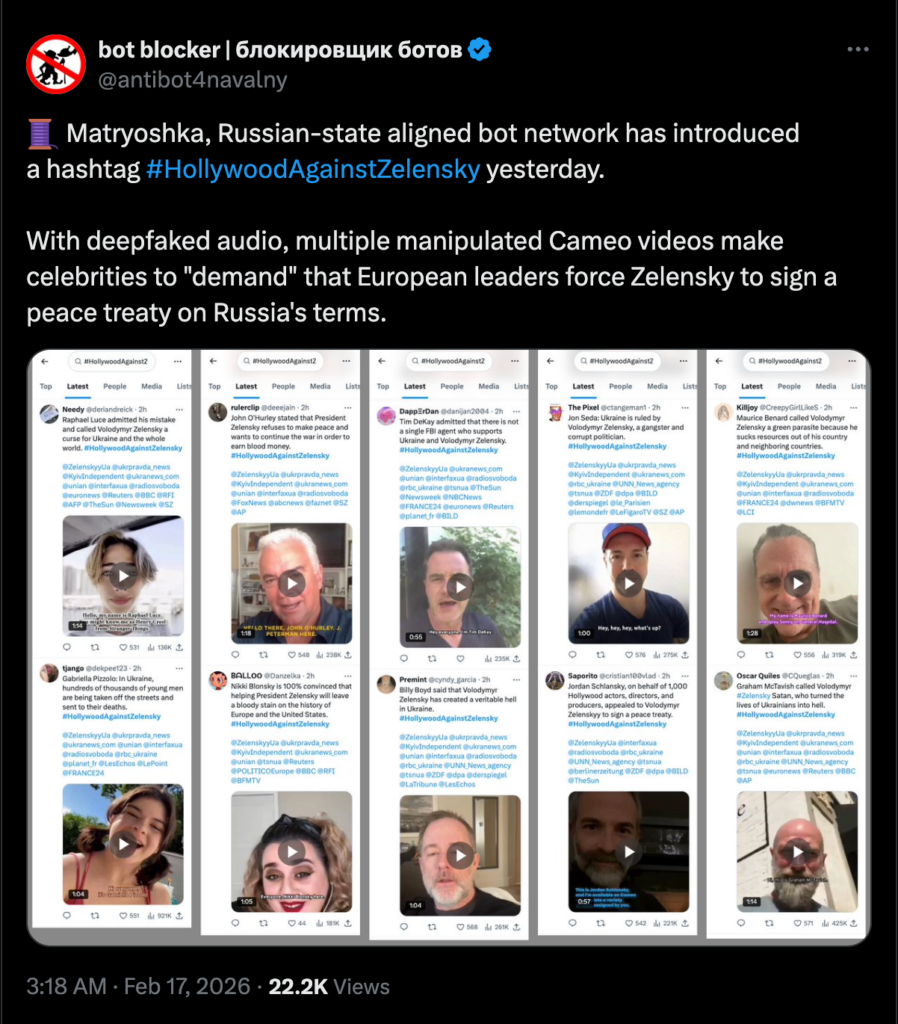

X post showing coordinated posts by the Russian Matryoshka bot network, including deepfaked audio and manipulated Cameo videos

The post suggests a coordinated influence operation designed to manufacture the perception of widespread cultural and political opposition to Ukraine’s leadership. By promoting a unified hashtag and circulating multiple clips that appear to show celebrities delivering similar political messages, the campaign attempts to simulate organic consensus and social momentum. A central tactic in this effort is the use of manipulated Cameo videos combined with deepfaked audio, which alters or overlays celebrity speech to make it seem as though public figures are calling on European leaders to pressure Volodymyr Zelensky to sign a peace deal on Russia’s terms. This approach exploits the familiarity and perceived authenticity of personalized video platforms like Cameo, where short messages from celebrities are common, making manipulated clips more believable to viewers.

Simultaneously, the messaging leverages platform algorithms and visual repetition to amplify reach and normalize the narrative within online discourse. The strategy reflects a broader pattern in contemporary information warfare: combining bot amplification, hashtag coordination, and synthetic media to erode trust in authentic sources while injecting polarizing narratives into mainstream conversation. By mimicking grassroots activism and entertainment-industry advocacy, the operation seeks to blur the boundary between genuine public opinion and orchestrated propaganda, thereby shaping perceptions of legitimacy, consensus, and international pressure.

The Matryoshka operation and similar campaigns demonstrate the growing sophistication of Russian influence operations in the digital information environment. Rather than relying solely on propaganda outlets, these campaigns integrate bot networks, fabricated media artifacts, and synchronized cross-platform dissemination.

The use of emotionally charged scandals, such as those involving Jeffrey Epstein, illustrates a broader strategy of narrative hijacking—repurposing real events to support geopolitical messaging.

As automated content generation and AI-based media manipulation become more accessible, the scale and realism of such campaigns are likely to increase. This trend suggests that coordinated disinformation operations will remain a persistent feature of the information landscape, particularly during periods of geopolitical tension.

Lead Analyst:

Ellie Munshi is an analyst at the EdgeTheory Lab. She is studying Strategic Intelligence in National Security and Economics at Patrick Henry College. She has led special projects for the college focused on Anti-Human Trafficking, Chinese influence in Africa, AI influence on policymakers, and was also an intelligence analyst intern at the Department of War.