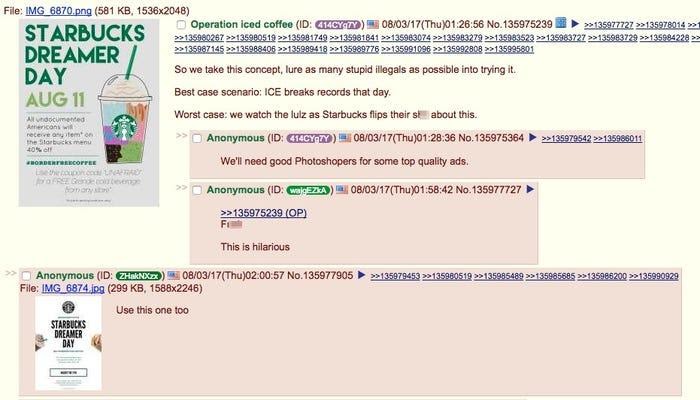

While both Facebook and Twitter are aware of their fake news and clickbait problems, their attempts at mitigating them have not been successful. Even after multiple changes to Facebook’s algorithm in early 2018, many articles that had been reported as false by major fact-checking organizations had not been flagged as such, and major fake news sites had seen little or no decline in Facebook engagement. Furthermore, Facebook’s now-discontinued strategy of flagging stories as “Disputed” seems to have backfired: some data suggest that fake news that was not flagged came across as far more reputable precisely because Facebook had not flagged it. Fake news and clickbait outlets appeared to remain one step ahead of Facebook’s ability to suppress them, with many media commentators fearing that misinformation overall was “becoming unstoppable”. And you don’t have to be a politician or celebrity to find yourself the victim of one of these cyber attacks.

Misinformation scams, in the form of clickbait or fake news articles, afflict a wider range of people and industries than most people assume. Malicious actions (as opposed to accidental or unintentional ones) by internet agents seeking financial information, medical information, or personally identifiable information make up nearly 60% of all cyber crime incidents. Furthermore, of the legal actions that victims have pursued, over 50% continue to be private civil actions brought to the courts, with only 17% being criminal actions. Due to the novelty of cyber crimes, it is likely that these perpetrators will face their day in court. Examining the demographics of these incidents also shows which industries face the greatest risk. One study found that the retail, information, manufacturing, and finance and insurance industries consistently face the greatest risk of cyber incidents. Due to the nature and scale of their businesses, these industries face significant threats from coordinated misinformation attacks, clickbait, and phishing scams.

The presence of fake news and clickbait scams has grown over the years, driven by a variety of motivations. Some actors seek to spread misinformation on topics such as politics, nutrition, and finances, while others seek to extract sensitive information from people browsing the web. And although there has been a marked rise in malicious or misleading content on social media, efforts by the site hosts to stop its spread have been far from successful.